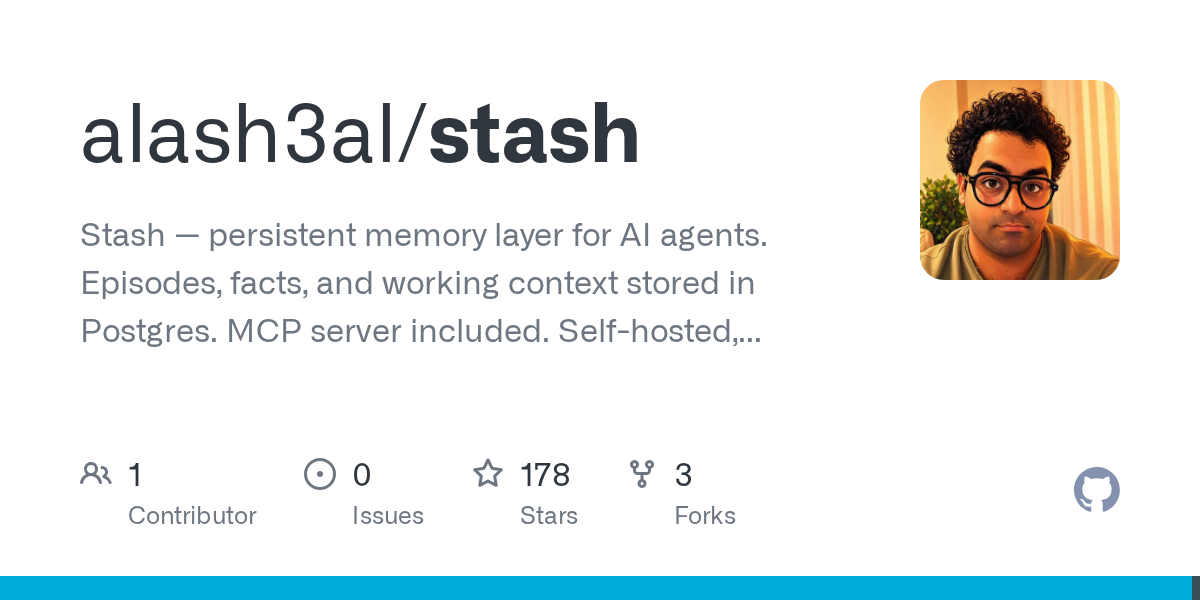

I stopped losing agent memory with Stash’s persistent cache

My LLM agent cluster kept hallucinating my router’s MAC address because its memory died on every restart. With Stash, I now keep versioned, queryable agent context across crashes—and ditched my $12/mo...
