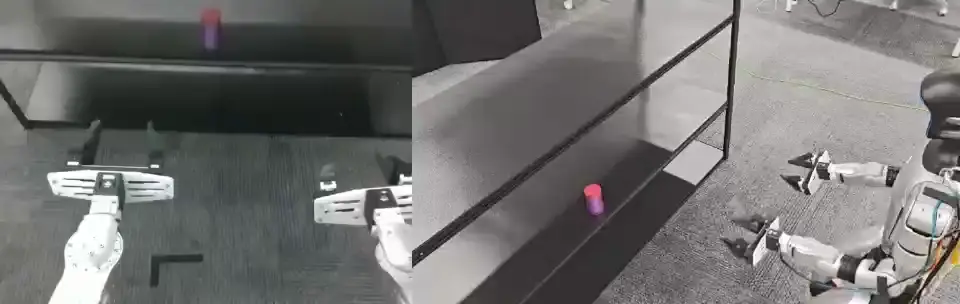

DiT4DiT is a Vision-Action-Model (VAM) framework developed for robotic manipulation tasks that require joint understanding of visual dynamics and physical action sequences. It integrates video generation models—specifically diffusion-based transformers—with flow-matching techniques to predict robot actions directly from visual input. Unlike traditional pipelines that separate perception and control, DiT4DiT unifies them into a single end-to-end model capable of generalizing across manipulation tasks without task-specific retraining. The project targets two main operational scales: tabletop setups (e.g., cup stacking, drawer opening) and full humanoid robot control (e.g., shelf organization, chair relocation). According to its README, it is the first VAM to achieve real-time whole-body control of humanoid robots—a distinction rooted in its architectural design and inference efficiency.

Core features

- Joint video-action modeling: Uses a DiT (Diffusion Transformer) backbone to generate future video frames while simultaneously predicting action trajectories via flow matching.

- Generalizable policies: A single trained model handles diverse tabletop tasks—no per-task fine-tuning required.

- Whole-body control support: Designed for Unitree G1 humanoid robots, enabling autonomous execution of complex physical workflows at 1× real-time speed.

- Modular project structure: Code is organized into clear submodules under DiT4DiT/, with separate logic for simulation, real-robot deployment, and data handling.

- Open research artifacts: Includes training scripts, evaluation metrics, and pre-rendered demo videos, though full training code for Unitree G1 tasks is still pending (listed under TODOs).
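To make the flow-matching idea from the feature list concrete, here is a minimal sketch in plain Python. It is not taken from the DiT4DiT codebase: every function name, the 3-value action, and the straight-line (linear interpolation) path are assumptions chosen for illustration. A trained network would normally predict the velocity; here an exact "oracle" velocity toward one known action stands in for it, so the sampling loop can be run end to end.

```python
import random

def interpolate(noise, action, t):
    """Point on the straight path from noise (t=0) to action (t=1)."""
    return [(1.0 - t) * n + t * a for n, a in zip(noise, action)]

def target_velocity(noise, action):
    """Regression target for a velocity network on that straight path."""
    return [a - n for n, a in zip(noise, action)]

def euler_sample(velocity_fn, noise, steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with Euler steps."""
    x = list(noise)
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt
        v = velocity_fn(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

def make_oracle_velocity(action):
    """Hypothetical stand-in for a trained model: exact velocity toward one action."""
    def velocity_fn(x, t):
        # Only evaluated for t < 1 by euler_sample, so the division is safe.
        return [(a - xi) / (1.0 - t) for a, xi in zip(action, x)]
    return velocity_fn

rng = random.Random(0)
demo_action = [0.25, -0.5, 0.1]  # hypothetical 3-value action, for illustration only
noise = [rng.gauss(0.0, 1.0) for _ in demo_action]
sampled_action = euler_sample(make_oracle_velocity(demo_action), noise, steps=10)
```

In a real system the oracle would be replaced by a learned model conditioned on visual features, trained to regress `target_velocity` at points produced by `interpolate`. The sampling loop itself would keep the same shape.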

Getting it running

The repository is written in Python and hosted on GitHub at Mondo-Robotics/DiT4DiT. As of the initial release on 2026-04-15, the README outlines setup steps but does not yet provide complete installation instructions or a dependency list: no requirements.txt, Dockerfile, or setup.py appears in the project structure it describes. That structure shows a top-level DiT4DiT/ directory, suggesting that usage begins by cloning the repo and navigating into that folder. The README references both simulation and real-robot execution paths, but it does not document concrete commands (a purely hypothetical example of the kind of command one might expect is python -m DiT4DiT.simulate --task cups). Until fuller documentation is added, users should expect to rely on the arXiv paper (2603.10448) and the project page for architectural details and usage patterns.

Who this is for

Researchers and engineers working at the intersection of robotics, vision-language models, and generative AI may find DiT4DiT relevant—particularly those exploring unified perception-action frameworks for manipulation. Its focus on generalization across tasks and real-time whole-body control makes it suitable for labs evaluating scalable robot policy learning, especially with Unitree G1 hardware. It is not intended for production deployment in unstructured environments: the demos shown are constrained to controlled settings (e.g., fixed camera angles, known object geometries, simulated or teleoperated initialization), and the repository lacks safety wrappers, hardware abstraction layers, or ROS integration notes. If you want a plug-and-play robot controller or a tool for rapid prototyping outside the specified hardware and task scope, this is not yet that tool.

How it compares

DiT4DiT differs from video-prediction models like PredRNN or PhyDNet by embedding action prediction directly into the generative process rather than treating it as a downstream regression head. It also diverges from behavior-cloning approaches (e.g., RT-1, ACT): rather than discretizing actions into tokens as RT-1 does, it uses continuous flow matching, which may improve temporal coherence. Compared to other robot-learning projects such as OpenVLA (a vision-language-action model) or RoboTwin (a dual-arm manipulation benchmark), DiT4DiT emphasizes real-time inference on humanoid platforms and does not rely on large-scale web-scraped robot datasets. However, it is narrower in scope: it does not support multi-modal instruction following (e.g., language commands), lacks integration with foundation models like Llama or Phi-3, and has no public checkpoint releases as of the initial code drop. It is also heavier than lightweight imitation learning baselines like BC-Z or RVT, given its DiT backbone and video-generation overhead.
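The contrast between action tokenization and continuous prediction can be illustrated with a small sketch. It shows generic uniform binning, not the exact scheme of any model named above. The [-1, 1] range, the 256-bin default, and the function names are assumptions for illustration. The point is that a tokenized action can only be recovered up to half a bin width, whereas a continuous method outputs real values directly.

```python
def tokenize(value, low=-1.0, high=1.0, bins=256):
    """Map a continuous value to a bin index (uniform discretization)."""
    clipped = min(max(value, low), high)
    idx = int((clipped - low) / (high - low) * bins)
    return min(idx, bins - 1)  # keep value == high inside the last bin

def detokenize(idx, low=-1.0, high=1.0, bins=256):
    """Return the centre of the bin for a token index."""
    width = (high - low) / bins
    return low + (idx + 0.5) * width

# Hypothetical, closely spaced action values along one dimension.
trajectory = [0.1234, 0.1250, 0.1266]
round_trip = [detokenize(tokenize(v)) for v in trajectory]

# Worst-case reconstruction error is bounded by half a bin width.
max_error = max(abs(a - b) for a, b in zip(trajectory, round_trip))
half_bin_width = (1.0 - (-1.0)) / 256 / 2
```

With finer bins the error shrinks but the token vocabulary grows, which is one reason continuous formulations such as flow matching are attractive for smooth trajectories.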

The repository has 224 stars and is licensed under MIT. It is actively maintained, with recent updates tied to the arXiv preprint and demo releases. While the core idea—unifying video dynamics and action prediction in a single transformer—is clearly articulated, the current codebase serves more as a research artifact than a fully documented framework. For now, it provides working demos, structural clarity, and a clear research direction—not a turnkey robot control stack. The project homepage and arXiv paper remain the most complete sources of technical detail.
