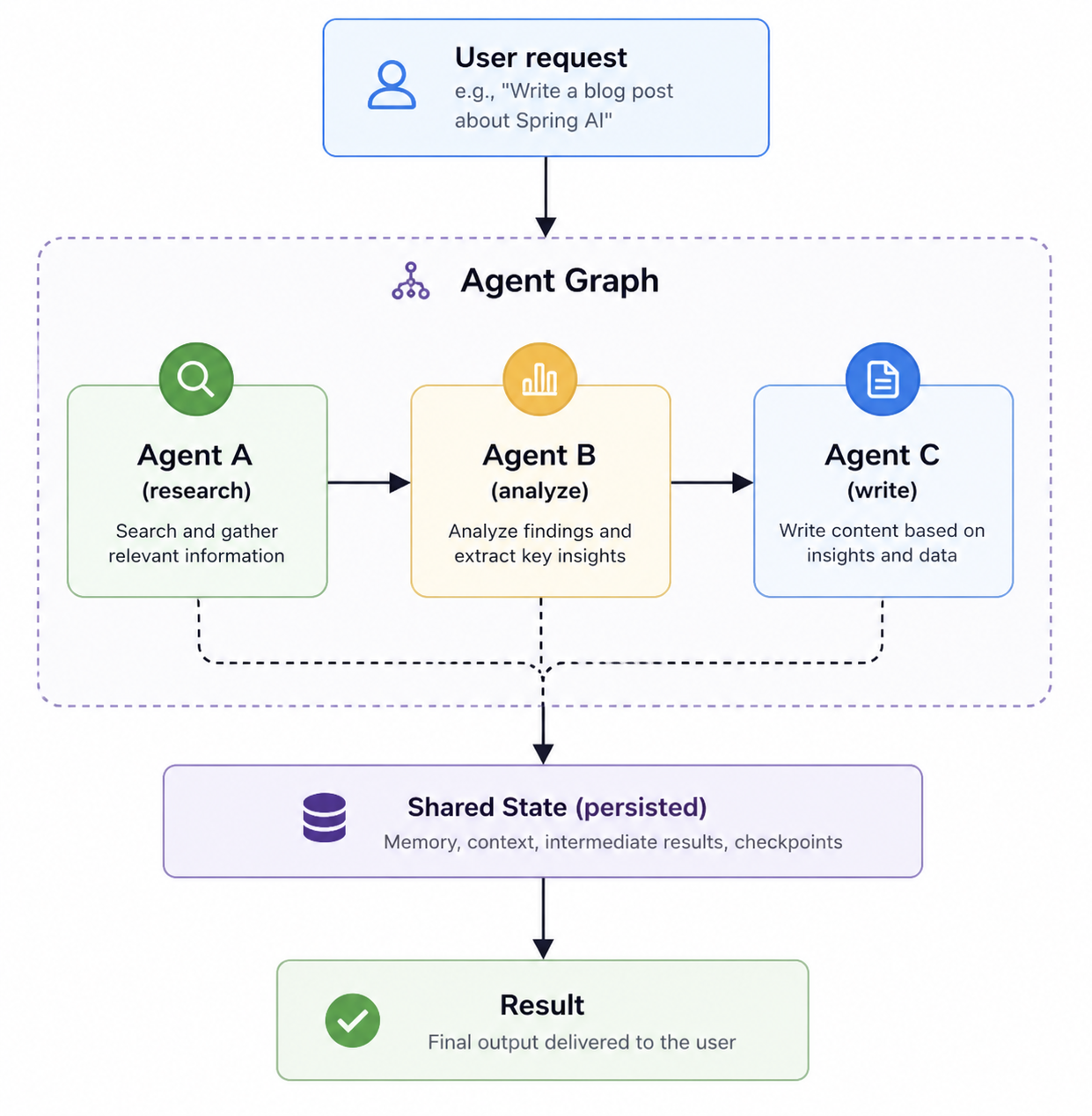

spring-agent-flow provides a framework for building stateful multi-agent workflows in Java, layered on top of Spring AI. It handles orchestration through coordinators and graphs, including retries, persistence, and shared state, without requiring custom glue code. The project addresses the gap in Spring AI by offering a runtime for multi-step, failure-prone, long-running AI systems that go beyond single LLM calls.

At its core, the framework separates concerns: ExecutorAgent instances perform tasks via chat clients with system prompts, while CoordinatorAgent routes between them using strategies like LLM-driven decisions. This setup manages state across agents, supports hybrid AI and deterministic logic, and ensures end-to-end traceability.
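
The split between executors and a coordinator can be sketched in plain Java. This is a minimal illustration under stated assumptions, not the spring-agent-flow API: `Router`, `run`, and `demo` are hypothetical names, the executors are simple string functions standing in for chat-client calls, and a fixed rule stands in for an LLM-driven routing decision.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.UnaryOperator;

// Hypothetical stand-ins for illustration; none of these types come from spring-agent-flow.
class CoordinatorSketch {

    // Picks the name of the next executor given the previous one, or null to stop.
    interface Router {
        String next(String previous);
    }

    // Runs executors in the order the router dictates, threading the output through.
    static String run(Map<String, UnaryOperator<String>> executors, Router router, String input) {
        String current = input;
        for (String name = router.next(null); name != null; name = router.next(name)) {
            UnaryOperator<String> executor = executors.get(name);
            if (executor == null) {
                throw new IllegalArgumentException("Unknown executor: " + name);
            }
            current = executor.apply(current);
        }
        return current;
    }

    static String demo() {
        Map<String, UnaryOperator<String>> executors = new LinkedHashMap<>();
        executors.put("research", text -> "facts(" + text + ")");
        executors.put("writing", text -> "report(" + text + ")");

        // A fixed rule standing in for an LLM-driven routing decision.
        Router router = previous -> previous == null ? "research"
                : previous.equals("research") ? "writing" : null;

        return run(executors, router, "topic");
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints report(facts(topic))
    }
}
```

Keeping the routing decision behind its own interface is what lets a deterministic rule and a model-backed strategy be swapped without touching the executors.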

Core features

The project offers two APIs for control:

- Squad API: Uses CoordinatorAgent with a map of named ExecutorAgents and a routing strategy. Dynamic routing happens without explicit graph definitions.

- Graph API: Builds explicit flows via AgentGraph, adding nodes, edges, and conditional loops (e.g., retrying on low confidence).

Key elements include:

- Typed shared state via AgentContext.

- Checkpoints and retries for resilience.

- Persistence for long-running workflows.

- Integration with Spring AI's chat clients.
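
To make "typed shared state" concrete, here is one common way to build a type-safe context in plain Java: keys that carry their value type. It is a sketch of the general technique only. `Key`, `Context`, `CONFIDENCE`, and `DRAFT` are hypothetical names, and the real AgentContext may work differently.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical illustration of typed keys; not the AgentContext implementation.
class TypedContextSketch {

    // A key carries its value type, so reads need no casts at the call site.
    record Key<T>(String name, Class<T> type) {}

    static final class Context {
        private final Map<Key<?>, Object> values = new HashMap<>();

        <T> Context put(Key<T> key, T value) {
            values.put(key, value);
            return this;
        }

        // Returns an empty Optional when the key was never set.
        <T> Optional<T> get(Key<T> key) {
            return Optional.ofNullable(key.type().cast(values.get(key)));
        }
    }

    static final Key<Double> CONFIDENCE = new Key<>("confidence", Double.class);
    static final Key<String> DRAFT = new Key<>("draft", String.class);

    public static void main(String[] args) {
        Context ctx = new Context()
                .put(CONFIDENCE, 0.82)
                .put(DRAFT, "first draft");

        double confidence = ctx.get(CONFIDENCE).orElse(0.0);
        System.out.println(confidence + " / " + ctx.get(DRAFT).orElse(""));
    }
}
```

With this shape, writing a String under `CONFIDENCE` fails at compile time instead of surfacing later as a cast error inside another agent.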

A diagram in the docs shows a coordinator dispatching tasks across a graph, maintaining state through execution.

A minimal example

The README provides a complete workflow in under 20 lines:

    // Two executors share one chat client but get different system prompts.
    ExecutorAgent researcher = ExecutorAgent.builder()
        .chatClient(chatClient)
        .systemPrompt("Find key facts.")
        .build();

    ExecutorAgent writer = ExecutorAgent.builder()
        .chatClient(chatClient)
        .systemPrompt("Write a clear report.")
        .build();

    // The coordinator picks among the named executors via an LLM-driven strategy.
    CoordinatorAgent coordinator = CoordinatorAgent.builder()
        .executors(Map.of("research", researcher, "writing", writer))
        .routingStrategy(RoutingStrategy.llmDriven(chatClient))
        .build();

    // Run the workflow on a single input and print the final text.
    AgentResult result = coordinator.execute(
        AgentContext.of("Compare Claude 4 and GPT-5"));

    System.out.println(result.text());

This produces output tracing the flow: routing to research, then writing, yielding a report on model strengths. The process remains stateful across steps.

For Graph API control, add nodes and edges:

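    // analyzer is a third ExecutorAgent, built like researcher and writer (not shown in this excerpt).
    AgentGraph graph = AgentGraph.builder()
        .addNode("research", researcher)
        .addNode("analyze", analyzer)
        .addNode("write", writer)
        .addEdge("research", "analyze")
        // Loop back to research while the analysis confidence is below 0.7.
        .addEdge(Edge.conditional("analyze",
            ctx -> ctx.get(CONFIDENCE).doubleValue() < 0.7,
            "research"));
    // The excerpt ends here; a closing build() call, as on the other builders, is presumably still needed.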

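The control flow behind such a conditional loop can be shown without the library. The sketch below is a self-contained toy graph runner, not AgentGraph itself: `GraphLoopSketch`, `State`, and the way confidence rises by 0.3 per research pass are all assumptions made for the example. A step limit guards against a loop that never reaches the threshold.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;
import java.util.function.Predicate;

// Toy graph runner for illustration; not the spring-agent-flow AgentGraph.
class GraphLoopSketch {

    // Mutable state shared by all nodes; a stand-in for a typed context.
    static final class State {
        double confidence;
        int researchRuns;
        final List<String> trace = new ArrayList<>();
    }

    record Edge(Predicate<State> when, String target) {}

    private final Map<String, Consumer<State>> nodes = new HashMap<>();
    private final Map<String, List<Edge>> edges = new HashMap<>();

    GraphLoopSketch node(String name, Consumer<State> action) {
        nodes.put(name, action);
        return this;
    }

    // Edges are checked in insertion order; the first matching one wins.
    GraphLoopSketch edge(String from, Predicate<State> when, String to) {
        edges.computeIfAbsent(from, k -> new ArrayList<>()).add(new Edge(when, to));
        return this;
    }

    // Runs until a node has no matching outgoing edge or maxSteps is reached.
    State run(String start, int maxSteps) {
        State state = new State();
        String current = start;
        for (int step = 0; current != null && step < maxSteps; step++) {
            state.trace.add(current);
            nodes.get(current).accept(state);
            String next = null;
            for (Edge e : edges.getOrDefault(current, List.of())) {
                if (e.when().test(state)) {
                    next = e.target();
                    break;
                }
            }
            current = next;
        }
        return state;
    }

    static State demo() {
        return new GraphLoopSketch()
                .node("research", s -> s.researchRuns++)
                // Assumption for the demo: each research pass adds 0.3 confidence.
                .node("analyze", s -> s.confidence = 0.3 * s.researchRuns + 0.2)
                .node("write", s -> { })
                .edge("research", s -> true, "analyze")
                .edge("analyze", s -> s.confidence < 0.7, "research")
                .edge("analyze", s -> true, "write")
                .run("research", 20);
    }

    public static void main(String[] args) {
        // prints [research, analyze, research, analyze, write]
        System.out.println(demo().trace);
    }
}
```

The first analysis yields 0.5, which is below 0.7, so the runner loops back once. The second yields 0.8 and falls through to the write node.
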
Live demo

A Hugging Face Space demonstrates a B2B customer operations workflow: https://huggingface.co/spaces/datallmhub/multi-agent-customer-ops. It orchestrates four agents in sequence (Triage, Lookup, Policy, Writer), combining AI routing with business rules. Features include typed state sharing and decision logs.

To run it locally, clone the full source from https://github.com/datallmhub/multi-agent-customer-ops. This repo uses spring-agent-flow for the complete pipeline.

Getting it running

The framework requires Java 17+ and Spring AI 1.0, and it is Apache 2.0 licensed. Add it as a dependency in a Spring Boot application. The docs mention no dedicated CLI or Docker image, so the expected path is to integrate it into an existing Spring AI setup.

Start with a ChatClient from Spring AI (e.g., OpenAI or others). Build agents as shown in the example above. Execute via coordinator.execute(AgentContext.of(input)) to get an AgentResult with text output and traces.

For persistence or advanced graphs, configure checkpoints in the builders; the repo docs cover the details. The build status shows CI via GitHub Actions.
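
As background on what checkpointing buys a long-running workflow, the sketch below shows the general idea in plain Java: save progress after each successful step so a rerun resumes where the last one failed. It is not the library's persistence mechanism. `Checkpoint`, `store`, and `run` are hypothetical names, and the in-memory map stands in for a durable store.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.UnaryOperator;

// Illustration of checkpoint-and-resume; not the spring-agent-flow persistence API.
class CheckpointSketch {

    // Snapshot of progress: index of the next step to run plus the state so far.
    record Checkpoint(int nextStep, String state) {}

    // In-memory store keyed by run id; a durable version would use a database or file.
    static final Map<String, Checkpoint> store = new HashMap<>();

    static String run(String runId, List<UnaryOperator<String>> steps, String input) {
        Checkpoint cp = store.getOrDefault(runId, new Checkpoint(0, input));
        String state = cp.state();
        for (int i = cp.nextStep(); i < steps.size(); i++) {
            state = steps.get(i).apply(state);              // may throw
            store.put(runId, new Checkpoint(i + 1, state)); // saved only after success
        }
        return state;
    }

    // Returns the order in which steps were actually invoked across two attempts.
    static List<String> demo() {
        store.clear();
        List<String> calls = new ArrayList<>();
        boolean[] failOnce = {true};
        List<UnaryOperator<String>> steps = List.of(
                s -> { calls.add("research"); return s + ">facts"; },
                s -> {
                    calls.add("write");
                    if (failOnce[0]) {
                        failOnce[0] = false;
                        throw new IllegalStateException("transient failure");
                    }
                    return s + ">report";
                });
        try {
            run("run-1", steps, "topic");
        } catch (IllegalStateException e) {
            // First attempt failed in "write"; the "research" result is already checkpointed.
        }
        run("run-1", steps, "topic"); // resumes at "write" without repeating "research"
        return calls;
    }

    public static void main(String[] args) {
        System.out.println(demo());                     // prints [research, write, write]
        System.out.println(store.get("run-1").state()); // prints topic>facts>report
    }
}
```

The research step runs once even though the workflow was started twice, which matters when each step is a slow or costly model call.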

Real-world use cases

The demo targets customer ops: incoming queries are triaged, relevant facts are looked up, policies are applied, and a response is generated. Agents share context like customer data, with deterministic checks (e.g., policy compliance) alongside LLM steps.
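
A hybrid pipeline of this shape can be outlined in plain Java. The sketch below does not reproduce the demo's code: the `Ticket` fields, the plan table, and the refund rule are invented for illustration, and the triage and writer steps are simple stubs where the real workflow would call a model. The policy step is the deterministic part.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Hypothetical triage -> lookup -> policy -> writer pipeline; not the demo's implementation.
class CustomerOpsSketch {

    // Shared state passed through every step, with a log of each decision.
    static final class Ticket {
        final String customer;
        final String message;
        String category;
        String plan;
        boolean refundAllowed;
        String reply;
        final List<String> decisionLog = new ArrayList<>();

        Ticket(String customer, String message) {
            this.customer = customer;
            this.message = message;
        }
    }

    // Invented customer data standing in for a real lookup service.
    static final Map<String, String> PLANS = Map.of("acme", "enterprise", "globex", "free");

    // Stub for an LLM classification step.
    static void triage(Ticket t) {
        boolean mentionsRefund = t.message.toLowerCase(Locale.ROOT).contains("refund");
        t.category = mentionsRefund ? "billing" : "general";
        t.decisionLog.add("triage: " + t.category);
    }

    static void lookup(Ticket t) {
        t.plan = PLANS.getOrDefault(t.customer, "unknown");
        t.decisionLog.add("lookup: plan=" + t.plan);
    }

    // Deterministic business rule: no model is consulted here.
    static void policy(Ticket t) {
        t.refundAllowed = t.category.equals("billing") && t.plan.equals("enterprise");
        t.decisionLog.add("policy: refundAllowed=" + t.refundAllowed);
    }

    // Stub for an LLM drafting step, constrained by the policy outcome.
    static void writer(Ticket t) {
        if (!t.category.equals("billing")) {
            t.reply = "Thanks for reaching out. A specialist will follow up.";
        } else if (t.refundAllowed) {
            t.reply = "Your refund has been approved.";
        } else {
            t.reply = "A refund is not available on your current plan.";
        }
        t.decisionLog.add("writer: reply drafted");
    }

    static Ticket handle(String customer, String message) {
        Ticket t = new Ticket(customer, message);
        triage(t);
        lookup(t);
        policy(t);
        writer(t);
        return t;
    }

    public static void main(String[] args) {
        Ticket t = handle("acme", "I would like a refund for last month.");
        System.out.println(t.reply);
        t.decisionLog.forEach(System.out::println);
    }
}
```

Because the refund decision is made by a rule and only then handed to the writer, the generated reply cannot contradict policy, and the decision log records which step decided what.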

Other fits include research pipelines (fact-finding to reporting, as in the example) or analysis chains needing loops and retries. Hybrid setups mix AI decisions with rules, useful where pure LLMs fall short on reliability.

Who this is for

Java developers familiar with Spring AI will find it direct—no YAML configs or Python ports needed. It suits teams building production workflows: stateful apps with 3+ agents, where orchestration overhead slows iteration.

If you're prototyping quick LLM chats, skip it—raw Spring AI chat clients suffice. Smaller projects (1-2 steps) don't gain much from the runtime.

Comparisons to alternatives

spring-agent-flow extends Spring AI specifically, avoiding general-purpose tools like LangGraph (Python-based) or CrewAI. It keeps everything in Java, with Spring's dependency injection and type safety. It needs no external runtime such as Kubernetes and runs in any JVM.

The repo has 19 GitHub stars, signaling an early-stage project from datallmhub. For non-Java stacks, consider AutoGen or Haystack equivalents.

This framework streamlines agent coordination for Spring AI users; check the source at https://github.com/datallmhub/spring-agent-flow for full builder options.
