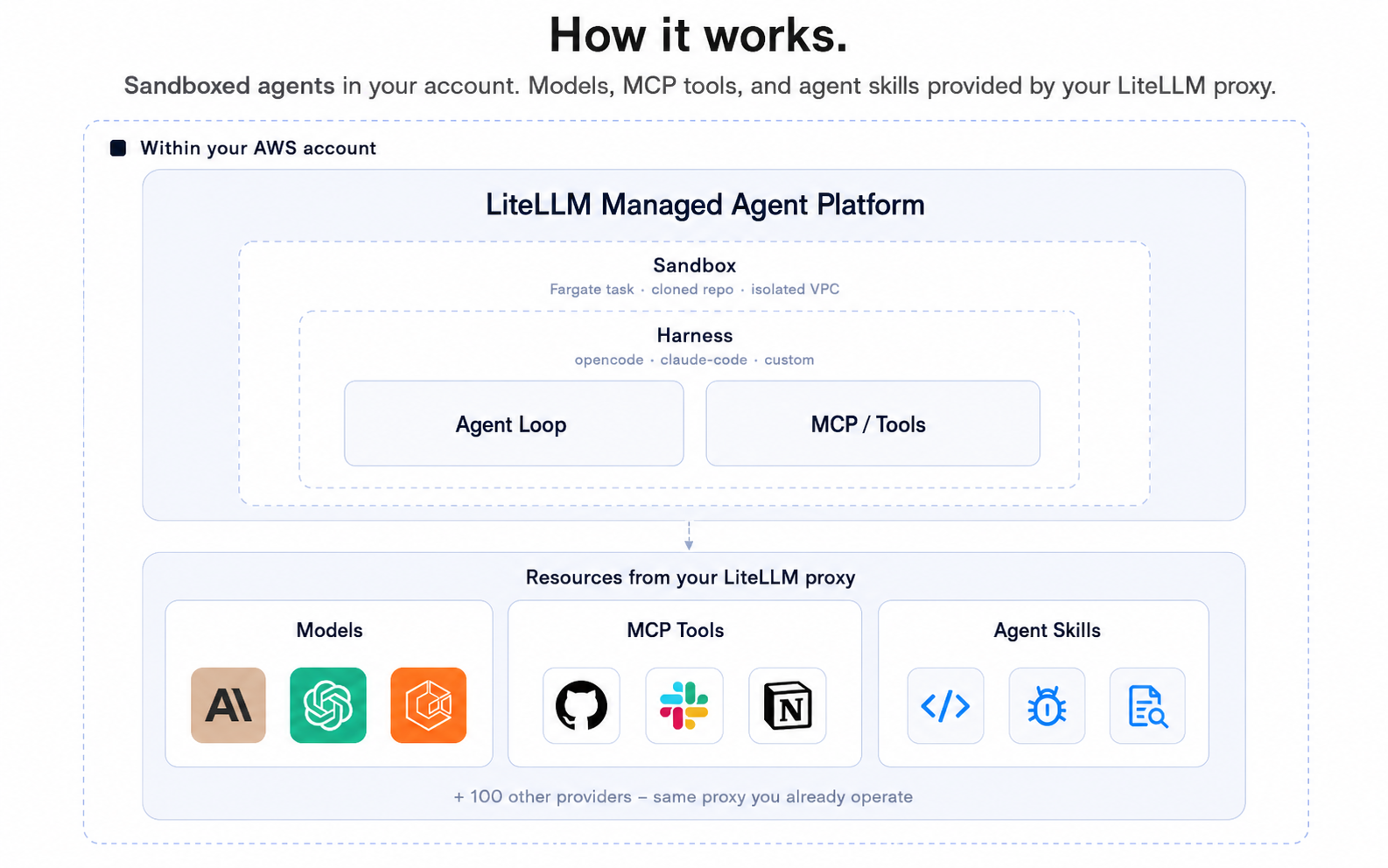

If you need a way to run multiple AI agents in production on your own infrastructure, BerriAI/litellm-agent-platform is worth a look. It's a TypeScript project that provides a self-hosted infrastructure layer for managing and deploying multiple agents. At 47 GitHub stars, it's still early-stage, but the premise is straightforward: instead of stitching together separate proxies, queues, and monitoring tools, you get a single platform designed to handle multiple agents behind your own firewall.

The project sits in the same ecosystem as LiteLLM, BerriAI's well-known LLM proxy. Where LiteLLM focuses on routing requests to various model providers, the agent platform extends that idea into multi-agent orchestration. If you're building workflows that involve several LLM-powered agents collaborating on tasks, this aims to be the infrastructure underneath.

Installing

The project is written in TypeScript, so running it from source requires Node.js. For most self-hosted setups, though, the quickest path is Docker. Clone the repository and build the image:

```bash
git clone https://github.com/BerriAI/litellm-agent-platform.git
cd litellm-agent-platform
docker build -t litellm-agent-platform .
docker run -p 4000:4000 litellm-agent-platform
```

If you prefer working directly with the source, install dependencies with your package manager of choice:

```bash
npm install
```

Then start the development server:

```bash
npm run dev
```
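Whichever route you take, the server may need a few seconds before it accepts connections. Below is a minimal TypeScript sketch of a readiness check you could run from a script or CI job before sending traffic. It assumes that any HTTP response from the base URL (even a 404) means the process is up, because no dedicated health endpoint is documented here; `waitForServer` is a hypothetical helper, not part of the platform.

```typescript
// Hypothetical helper: poll a URL until something answers, or give up.
// Assumption: any HTTP response (even 404) means the server is accepting connections.

type FetchLike = (url: string) => Promise<{ status: number }>;

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

async function waitForServer(
  url: string,
  attempts = 20,
  delayMs = 500,
  fetchFn: FetchLike = (u) => fetch(u),
): Promise<boolean> {
  for (let i = 1; i <= attempts; i++) {
    try {
      await fetchFn(url); // resolves as soon as the port is answering HTTP
      return true;
    } catch {
      // Connection refused or similar: the server is not up yet.
      if (i < attempts) await sleep(delayMs);
    }
  }
  return false;
}
```

For example, `await waitForServer("http://localhost:4000")` gives up and returns `false` after roughly ten seconds of failed attempts with the default settings. The `fetchFn` parameter exists so the retry logic can be tested without a live server.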

Make sure your environment has access to the LLM provider or proxy endpoint you plan to route agent requests through. The platform is designed to integrate with LiteLLM-compatible endpoints, so if you're already running LiteLLM as a gateway, the connection should be straightforward.
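One way to confirm that access is to list the models your gateway exposes. LiteLLM's proxy speaks the OpenAI-compatible API, so a `GET /v1/models` request should work against it. The sketch below assumes that; the base URL and API key you pass in are placeholders for your own setup, and the helper names are hypothetical.

```typescript
// Sketch: check that an OpenAI-compatible gateway (e.g. a LiteLLM proxy) is reachable
// and return the model IDs it exposes. Base URL and key are supplied by the caller.

interface ModelList {
  data?: Array<{ id: string }>;
}

// Build the request separately so the URL/header logic is easy to test.
function modelsRequest(
  baseUrl: string,
  apiKey?: string,
): { url: string; headers: Record<string, string> } {
  const url = `${baseUrl.replace(/\/+$/, "")}/v1/models`; // strip trailing slashes
  const headers: Record<string, string> = {};
  if (apiKey) headers["Authorization"] = `Bearer ${apiKey}`;
  return { url, headers };
}

// OpenAI-style list responses look like { data: [{ id: "..." }, ...] }.
function modelIds(body: ModelList): string[] {
  return (body.data ?? []).map((m) => m.id);
}

async function listGatewayModels(baseUrl: string, apiKey?: string): Promise<string[]> {
  const { url, headers } = modelsRequest(baseUrl, apiKey);
  const res = await fetch(url, { headers });
  if (!res.ok) throw new Error(`Gateway check failed: HTTP ${res.status}`);
  return modelIds((await res.json()) as ModelList);
}
```

An empty list or an HTTP error here usually means the agent platform won't be able to reach any models either, which is easier to diagnose before agents are involved.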

Basic usage

Once the platform is running, the core idea is registering agents and assigning them tasks through the platform's API. A minimal interaction looks something like this:

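```typescript
// Send a task to a registered agent and print its output.
// Top-level await requires an ES module (or wrap this in an async function).
const response = await fetch("http://localhost:4000/api/agent/run", {
  method: "POST",
  headers: { "Content-Type": "application/json" },
  body: JSON.stringify({
    agent_id: "support-agent",
    task: "Answer the following customer query: Where is my order?",
  }),
});

// fetch only rejects on network errors, so check the HTTP status explicitly.
if (!response.ok) {
  throw new Error(`Agent run failed: HTTP ${response.status}`);
}

const result = await response.json();
console.log(result.output);
```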

You define agents by ID, send them tasks through the API, and the platform handles execution. Because the platform is self-hosted, the orchestration layer runs inside your own network: there's no third-party SaaS layer sitting between your application and the LLM providers.

For managing multiple agents, the platform exposes endpoints for listing registered agents, checking their status, and routing specific tasks to specific agent IDs. This is the production-oriented piece: rather than manually managing which agent handles which job, the platform acts as the coordination layer.
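From the client side, coordinating several agents can be as simple as sending each task to its agent ID in parallel. This sketch reuses the request shape from the basic example (`/api/agent/run` with `agent_id` and `task`, returning `output`), which is itself illustrative; `dispatchAll` and `httpRunAgent` are hypothetical helper names. `Promise.allSettled` keeps one failing agent from discarding the other results.

```typescript
// Sketch: fan tasks out to different agent IDs concurrently and collect every result.
// The endpoint path and body/response fields mirror the basic example and are assumptions.

interface AgentJob {
  agentId: string;
  task: string;
}

type RunAgent = (job: AgentJob) => Promise<string>;

// Build a runner that calls the platform over HTTP.
const httpRunAgent = (baseUrl: string): RunAgent => async ({ agentId, task }) => {
  const res = await fetch(`${baseUrl}/api/agent/run`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ agent_id: agentId, task }),
  });
  if (!res.ok) throw new Error(`${agentId}: HTTP ${res.status}`);
  const result = (await res.json()) as { output: string };
  return result.output;
};

// Run all jobs at once; a rejected job is reported instead of aborting the batch.
async function dispatchAll(jobs: AgentJob[], run: RunAgent) {
  const settled = await Promise.allSettled(jobs.map((job) => run(job)));
  return settled.map((s, i) => ({
    agentId: jobs[i].agentId,
    ok: s.status === "fulfilled",
    value: s.status === "fulfilled" ? s.value : String(s.reason),
  }));
}
```

A call might look like `await dispatchAll([{ agentId: "support-agent", task: "..." }], httpRunAgent("http://localhost:4000"))`. Passing the runner in as a parameter also makes it easy to swap in a stub for tests.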

Advanced bits

Multi-agent orchestration. The platform is built around the concept of running several agents simultaneously. Instead of a single chatbot endpoint, you maintain a pool of specialized agents, each with a defined role or task type. The platform routes incoming work to the appropriate agent based on the request parameters. This is particularly useful in scenarios where different parts of a pipeline require different capabilities, such as one agent handling summarization and another handling classification.
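The routing idea can be sketched as a lookup from a task's type to the agent responsible for it. The task types, agent IDs, and fallback agent below are hypothetical, and the platform's actual routing rules may be richer; the returned object simply matches the `agent_id`/`task` body used in the basic example.

```typescript
// Sketch of type-based routing: a table maps each task type to an agent ID.
// All types and agent IDs here are hypothetical placeholders.

const routes = new Map<string, string>([
  ["summarize", "summarizer-agent"],
  ["classify", "classifier-agent"],
]);

interface IncomingTask {
  type: string; // arrives as untyped JSON, so any string is possible
  input: string;
}

// Pick the agent for this task type, falling back to a general-purpose agent.
function routeTask(task: IncomingTask, fallback = "general-agent"): { agent_id: string; task: string } {
  const agentId = routes.get(task.type) ?? fallback;
  return { agent_id: agentId, task: task.input };
}
```

Using a `Map` rather than a plain object means an unexpected type such as `"constructor"` falls through to the fallback instead of matching an inherited object property.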

Self-hosted control plane. Everything runs on your infrastructure. For teams with data residency requirements or strict access controls, this is a meaningful distinction from managed alternatives: no hosted control plane sits in the request path, so data only needs to leave your network to reach the model providers you configure, and you control the deployment lifecycle. The platform is designed to be lightweight enough to run alongside your existing services without requiring a dedicated cluster.

When to use it

This platform fits well if you need to coordinate multiple LLM-powered agents in a production environment and you want to keep everything self-hosted. It's a good fit for teams that already use LiteLLM or other LLM proxies and want a layer on top that manages agent dispatch and task routing. If your use case involves a single agent or a simple chatbot, the platform is likely more infrastructure than you need.

It's also worth noting that the project is still in its early stages. With 47 stars and a relatively new repository, it has a small community. Documentation and supported integrations may be limited compared to more mature solutions. If you need battle-tested stability with a large plugin ecosystem, you may want to evaluate more established options first. But if you're comfortable with early-stage open-source software and want a lightweight, self-hosted multi-agent coordination layer, it's a project worth exploring.

The source is on GitHub.
