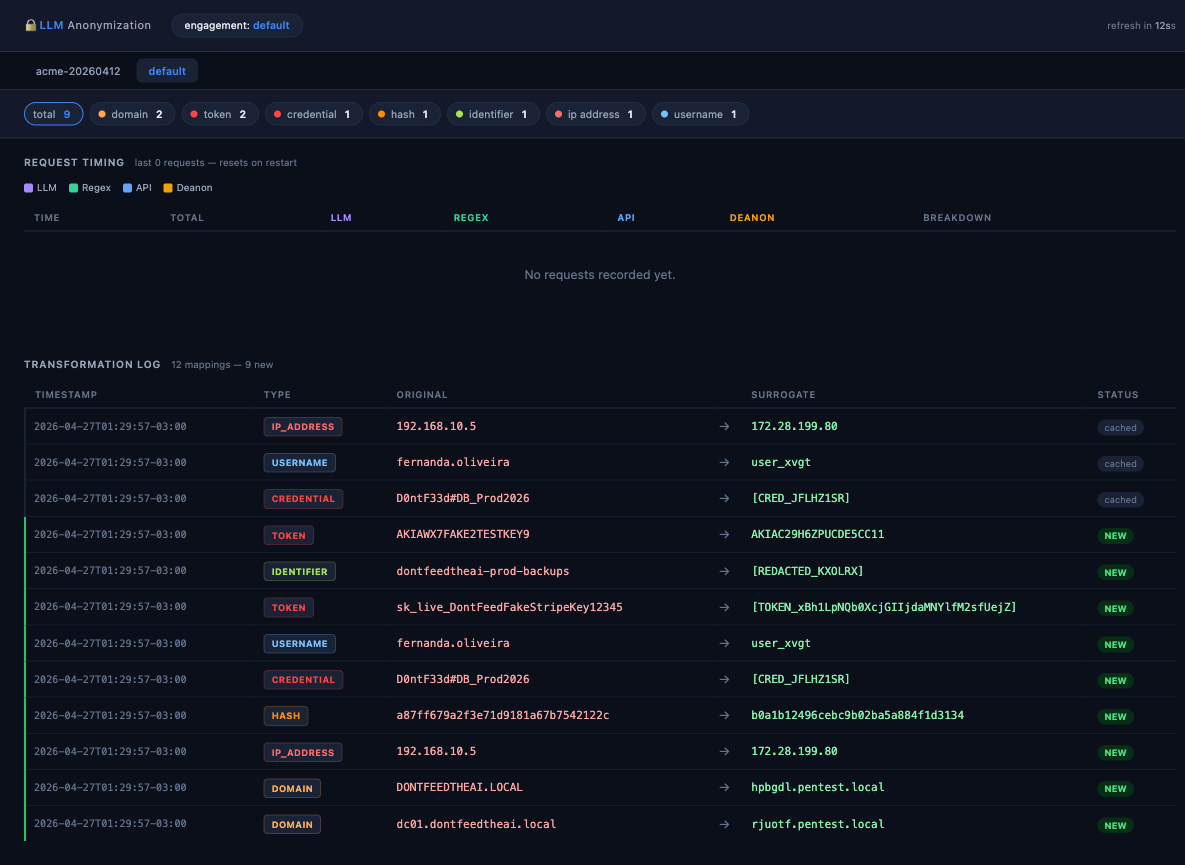

DontFeedTheAI is a Python-based reverse proxy designed to sit between a user's shell or tools and the Anthropic Claude API. It anonymizes sensitive information like IP addresses, hashes, credentials, hostnames, and personally identifiable information (PII) in requests before they reach the cloud service. On the response path, it restores the original data. This setup uses a dual-layer detection system running locally: an Ollama LLM for identifying context-aware sensitive items such as hostnames or credentials embedded in prose, backed by regex patterns for structured data like IPs, hashes, tokens, and API keys. The project, hosted at https://github.com/zeroc00I/DontFeedTheAI, has 492 GitHub stars and operates under an MIT license. It targets users handling confidential data who want Claude's reasoning capabilities without exposing real details to Anthropic's infrastructure.

The proxy maintains a per-engagement vault to track substitutions and includes a self-improving feedback loop. Sensitive processing stays on the local machine, so no raw values cross to the cloud. In a session built around a command like nmap -sV dc01.acmecorp.local, for example, dc01.acmecorp.local becomes srv-0042.pentest.local, 10.20.0.10 turns into 203.0.113.47, and Admin@Acme2024! maps to [CRED_XK9A2B3C]. Claude sees only the surrogates, reasons over them, and returns a response in which the proxy swaps the original values back.
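The substitute-then-restore round trip described above can be sketched in a few lines. This is a minimal illustration, not the project's implementation: the Vault class, its method names, and the [IP_...] token format are all hypothetical, and it handles only IPv4 addresses with a simple regex. The project's own example maps an IP to another IP, whereas this sketch uses a bracketed token for simplicity.

```python
import re
import secrets

# Illustrative pattern only: matches dotted-quad IPv4-looking strings.
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


class Vault:
    """Hypothetical in-memory mapping between real values and surrogates."""

    def __init__(self):
        self.real_to_fake = {}
        self.fake_to_real = {}

    def surrogate_for(self, real):
        # Reuse an existing surrogate so repeated values stay consistent.
        if real not in self.real_to_fake:
            fake = f"[IP_{secrets.token_hex(4).upper()}]"
            self.real_to_fake[real] = fake
            self.fake_to_real[fake] = real
        return self.real_to_fake[real]

    def anonymize(self, text):
        # Outbound path: replace every match with its surrogate.
        return IPV4.sub(lambda m: self.surrogate_for(m.group(0)), text)

    def restore(self, text):
        # Inbound path: map surrogates in the response back to real values.
        for fake, real in self.fake_to_real.items():
            text = text.replace(fake, real)
        return text
```

Calling anonymize on the outbound text and restore on the model's reply gives the round trip. Because the vault reuses surrogates, the same real value always appears to the model as the same token.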

Core features

- Transparent proxying with FastAPI: Intercepts requests to Claude's API, applies anonymization outbound and restoration inbound without altering the workflow.

- Local dual detection: Ollama LLM spots semantic sensitive data (e.g., org names in text), while regex handles precise matches (IPs, hashes).

- Per-engagement isolation: Each session gets its own vault for substitutions, preventing cross-contamination.

- Wizard setup script: wizard.py guides configuration for engagement details, local paths, VPS tunneling if needed, and Ollama model selection.

- Cross-platform support: Runs on Windows, macOS, and Linux with Python 3.11+ and Ollama installed.

These elements address the gap where direct Claude use logs real data, standalone Ollama lacks sensitivity detection, and cloud anonymizers introduce extra dependencies.
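To make the dual-detection idea from the feature list concrete, here is a hedged sketch of how regex findings and LLM findings might be merged. The function names, the PATTERNS table, and the llm_detect callable are assumptions for illustration. The callable stands in for a wrapper around a local model, and nothing here reflects the project's actual detector interface.

```python
import re

# Illustrative regexes for structured data; real coverage would be broader.
PATTERNS = {
    "ip": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "md5": re.compile(r"\b[a-fA-F0-9]{32}\b"),
}


def regex_detect(text):
    """Return the set of (kind, value) pairs found by the regex layer."""
    return {
        (kind, m.group(0))
        for kind, rx in PATTERNS.items()
        for m in rx.finditer(text)
    }


def detect(text, llm_detect):
    """Union of regex findings and findings from a local LLM callable.

    llm_detect is a stand-in: any function that takes text and returns an
    iterable of (kind, value) pairs.
    """
    findings = regex_detect(text)
    # Keep only LLM findings that literally occur in the text, so a
    # hallucinated value cannot trigger a substitution.
    findings |= {(k, v) for k, v in llm_detect(text) if v and v in text}
    # Longest values first, so a short value is not replaced inside a longer one.
    return sorted(findings, key=lambda kv: len(kv[1]), reverse=True)
```

Filtering the model's output against the input text is one simple guard against invented values. Sorting by length matters when one sensitive string contains another.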

Getting it running

Setup requires basic prerequisites: Python 3.11+, Ollama (with a suitable model), and pip. The project uses FastAPI under the hood but exposes a simple CLI entrypoint.

Clone the repository and install dependencies:

git clone https://github.com/zeroc00I/DontFeedTheAI

cd DontFeedTheAI

pip install -r requirements.txt

Launch the interactive wizard:

python3 wizard.py

This script prompts for essentials: an engagement name (for vault isolation), local runtime path, optional VPS address for tunneling, and the Ollama model (e.g., one tuned for detection). It then deploys the proxy, opens the necessary tunnel, and activates Claude access through it. For full options:

python3 wizard.py --help

Once running, direct tools or shells to the local proxy endpoint (typically configured automatically). Output from nmap, mimikatz, or BloodHound can then be passed through without extra steps. See docs/architecture.md for deeper internals on proxy flows and vault management. No Docker support is mentioned; installation is pip-based for direct execution.
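For a tool that talks to Claude over HTTP, pointing at the proxy amounts to swapping the base URL. The sketch below builds, but does not send, a standard Anthropic Messages API request against a local address. The address http://127.0.0.1:8080, the placeholder key, and the model name are hypothetical, and it assumes the proxy exposes the same /v1/messages path as the upstream API; use whatever endpoint the wizard actually reports.

```python
import json
import urllib.request


def build_messages_request(base_url, api_key, model, prompt):
    """Build (but do not send) a Messages API call aimed at base_url."""
    body = {
        "model": model,
        "max_tokens": 512,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "content-type": "application/json",
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


# Hypothetical local proxy address and placeholder credentials.
PROXY_URL = "http://127.0.0.1:8080"
req = build_messages_request(
    PROXY_URL, "sk-placeholder", "your-claude-model", "Summarize this nmap output"
)
# Send with urllib.request.urlopen(req) once the proxy is running.
```

Clients built on the official Anthropic SDKs can usually achieve the same effect by setting a custom base URL instead of constructing requests by hand.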

Who this is for

The tool fits workflows where AI reasoning on structured data risks exposure. Its README outlines specific users:

| User type | Benefit |
|---|---|
| Pentesters | Process nmap, mimikatz, or BloodHound outputs with Claude without revealing client IPs, hosts, or creds. |
| Developers & SREs | Debug production configs or data in regulated setups, stripping internal details. |
| Legal & consulting | Review anonymized contracts, case files, or IP via AI without client data leaks. |
| Finance & compliance | Analyze audit scripts or reports, hiding account info. |
| Researchers | Query LLMs on private datasets safely. |

If your daily scans or analyses involve live pentest environments, client NDAs, or compliance audits, this proxy layers protection over Claude without workflow changes.

How it compares

Direct alternatives fall short in key ways, as diagrammed in the README.

Cloud anonymization APIs paired with Claude require two services: data hits the anonymizer first (incurring a separate bill and trust), then Claude. Sensitive content still leaves the local machine.

Ollama standalone keeps data local but processes raw inputs: with no built-in filtering, it reasons directly on real IPs or credentials.

Claude alone delivers top reasoning but sends everything to Anthropic's infrastructure, where it is exposed to policy shifts or breaches.

DontFeedTheAI combines Claude's cloud power with Ollama's local detection: data structure and intent reach the API as surrogates, with zero sensitive outbound transmission. Mermaid flowcharts in the README visualize this:

flowchart LR

s3["🖥️ Your Shell\nreal data"] --> p["🛡️ DontFeedTheAI"]

o2["🧠 Ollama\nlocal detector\nnever leaves machine"] --> p

p --> c2["☁️ Anthropic API\nsees only surrogates"]

No second bill or third party. Drawbacks include dependency on Ollama (needing a decent local model and hardware) and manual wizard runs per engagement.

Vault management and limits

Each engagement creates an isolated vault for mappings, which supports the feedback loop in which false positives refine detection over time. Regex covers rote patterns reliably, while Ollama handles nuanced prose. It is not a full data loss prevention (DLP) suite: detection relies on these two layers, so edge cases in obfuscated text might slip through. Users without Claude access or hardware capable of running a local Ollama model won't benefit. For full details, check the GitHub repo at https://github.com/zeroc00I/DontFeedTheAI.
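Per-engagement isolation can be pictured as one mapping store per engagement name. The sketch below uses one JSON file per engagement under a root directory. The file layout and the function names are assumptions made for illustration, not the project's storage format, and a real vault holding client data would also need access controls or encryption.

```python
import json
from pathlib import Path


def vault_path(root, engagement):
    """One JSON file per engagement; this layout is an assumption."""
    safe = "".join(c if c.isalnum() or c in "-_" else "_" for c in engagement)
    return Path(root) / f"{safe}.json"


def load_vault(root, engagement):
    """Return the real-to-surrogate mapping for one engagement (empty if new)."""
    path = vault_path(root, engagement)
    return json.loads(path.read_text()) if path.exists() else {}


def save_mapping(root, engagement, real, surrogate):
    """Add one mapping to the engagement's vault and write it back to disk."""
    vault = load_vault(root, engagement)
    vault[real] = surrogate
    path = vault_path(root, engagement)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(vault, indent=2))
    return vault
```

Because each engagement reads and writes only its own file, a surrogate recorded for one client never appears when another client's vault is loaded.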
